HANGZHOU, China, Jan. 9, 2025 /PRNewswire/ -- Recently, SpacemiT, a RISC-V AI CPU company from China, announced breakthrough progress in the development of its server CPU chip SpacemiT Vital Stone® V100. It now provides a complete RISC-V CPU chip hardware and software platform that fully supports server specifications.

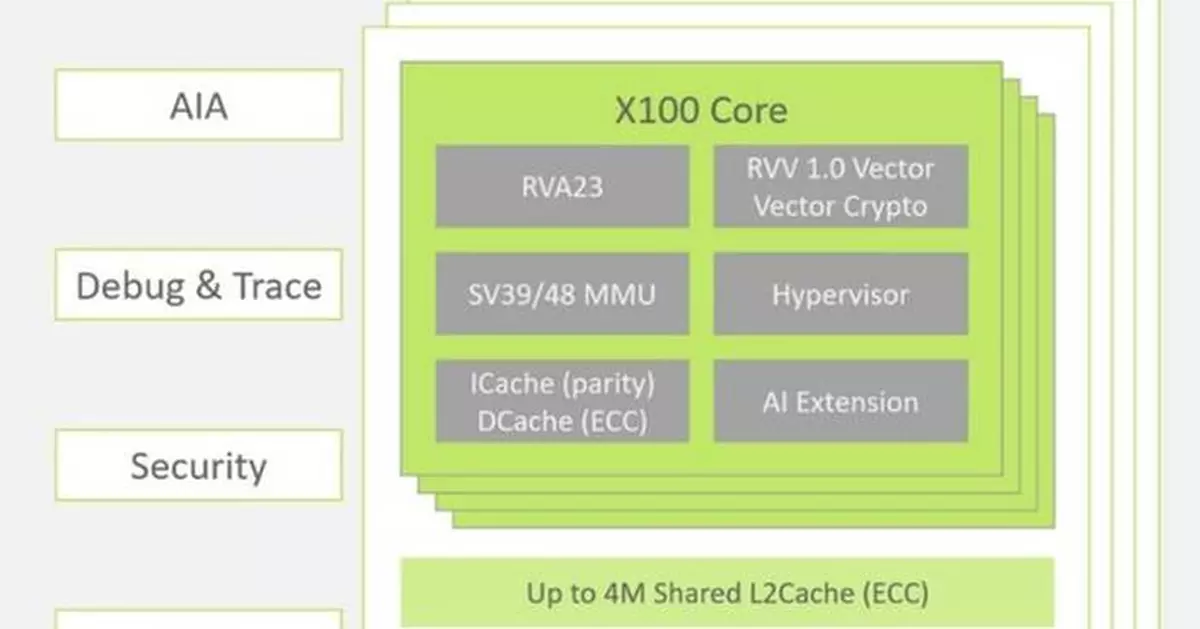

Key IPs:

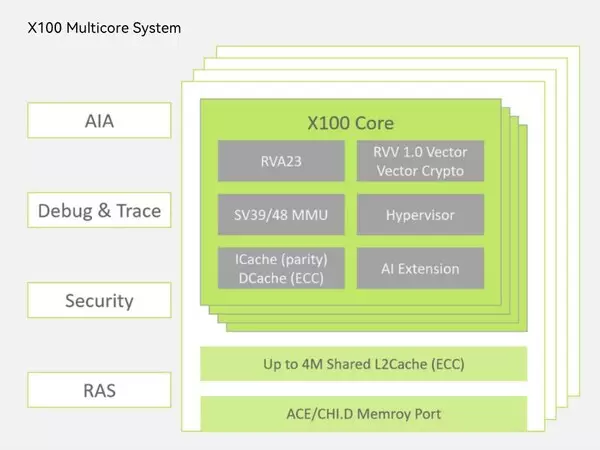

The key IPs include the RISC-V CPU core X100, AIA and APLIC for interrupt virtualization, an IOMMU for memory virtualization, IOPMP for security functions, and LPC and eSPI for communication with mainstream BMCs, among others.

- The 64-bit server-grade RISC-V CPU core X100 delivers a single-core performance of >9 points/GHz on SPECINT2006 at 2.5GHz@12nm. X100 supports the RVA23 Profile, full virtualization (Hypervisor 1.0, AIA 1.0, IOMMU), RAS features, Vector 1.0 extension, vector encryption and decryption, security, 64-core interconnect, and more.

- The IOMMU IP adheres to the RISC-V IOMMU architecture specification and the AXI4-Stream DTI interface. Its DID and PID settings, virtual and physical address widths, and the sizes of the translation caches at each level are all configurable. It can be flexibly integrated at different locations within the SoC bus system to enable distributed virtualization of peripherals and accelerators.
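As a rough illustration of the X100 performance figure quoted above, the per-GHz number can be converted into an implied single-core score. This is only a sketch: it assumes the >9 points/GHz figure was measured at the stated 2.5GHz clock, and the function name `implied_score_floor` is hypothetical, not part of any SpacemiT tool.

```python
def implied_score_floor(points_per_ghz: float, clock_ghz: float) -> float:
    """Lower bound on the single-core score implied by a per-GHz figure.

    Assumption: the per-GHz figure was measured at the given clock.
    """
    return points_per_ghz * clock_ghz


# Figures taken from the release: more than 9 points/GHz at 2.5 GHz.
floor = implied_score_floor(9.0, 2.5)
print(f"Implied single-core SPECINT2006 score: more than {floor:.1f}")
```

Under that assumption, the release's figures imply a single-core SPECINT2006 score above 22.5.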

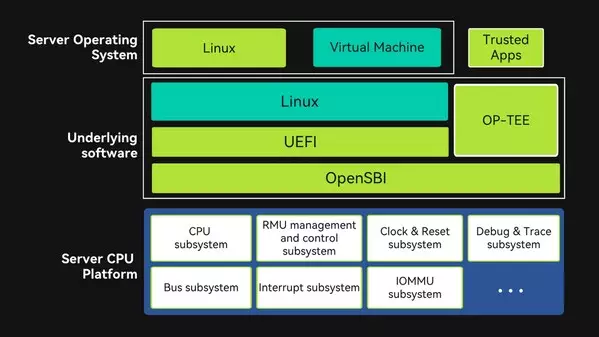

Key Subsystems:

The key subsystems include the CPU subsystem, bus subsystem, IOMMU subsystem, interrupt subsystem, debug & trace subsystem, clock & reset subsystem, and RMU management and control subsystem, among others. Together, they make up the server CPU chip platform.

Software R&D Progress:

On its self-developed server CPU chip platform, SpacemiT has completed server platform firmware that complies with the RISC-V Boot and Runtime Services (BRS) specification. This includes OpenSBI, UEFI (BIOS), Linux, and other low-level software that meets the requirements of the Supervisor Binary Interface (SBI), UEFI (BIOS), SMBIOS, ACPI, and other specifications. The Linux operating system has been ported and adapted to the platform, which also supports the GlobalPlatform-standard OP-TEE secure operating system. The platform firmware and operating system now run successfully, and have been demonstrated, on an FPGA implementation of the server CPU chip platform.

About SpacemiT:

SpacemiT is a computing ecosystem company built on the new-generation RISC-V architecture. Its portfolio covers full-stack computing technologies, including high-performance RISC-V CPU cores, AI-CPU cores, AI CPU chips, and software systems. The company provides end-to-end computing system solutions and is committed to using RISC-V AI CPUs to build the best native computing platform for the new AI era of large models, promoting the development of new applications such as AI computers and AI robots. Please visit https://www.spacemit.com/en/ for more information.

Business Contact

business@spacemit.com

Media Contact

media@spacemit.com

** The press release content is from PR Newswire. Bastille Post is not involved in its creation. **

RISC-V Breakthrough: SpacemiT Develops Server CPU Chip V100 for Next-Generation AI Applications
