SINGAPORE--(BUSINESS WIRE)--Dec 24, 2025--

Z.ai released GLM-4.7 ahead of Christmas, marking the latest iteration of its GLM large language model family. As open-source models move beyond chat-based applications and into production environments, they are increasingly expected to handle long-running tasks. GLM-4.7 has been developed with these requirements in mind.

This press release features multimedia. View the full release here: https://www.businesswire.com/news/home/20251223393714/en/

Unlike earlier systems focused on single-turn interactions, GLM-4.7 targets development environments that involve longer task cycles, frequent tool use, and higher demands for stability and consistency.

Built on GLM-4.6 with a focus on engineering use

GLM-4.7 builds on GLM-4.6 with a design oriented more firmly toward engineering use. Support for coding workflows, complex reasoning, and agent-style execution has been strengthened, so the model stays more consistent across long, multi-step tasks and behaves more stably when interacting with external tools. For developers, the practical result is a model that can be used in everyday engineering work with greater confidence.

The improvements extend beyond technical performance. In conversational, writing, and role-playing settings, GLM-4.7 produces output that is more natural and economical, with a tone closer to everyday communication. Together, these changes point to GLM evolving into a more coherent open-source system, rather than a loose collection of related models. In an ecosystem where many projects remain fragmented or narrowly scoped, that coherence stands out.

Designed for real development workflows

As artificial-intelligence systems move beyond chat-centred applications, developers are facing a new set of challenges, and expectations for model quality have become more exacting. A capable model must do more than understand requirements or follow structured plans. It also needs to call external tools correctly and remain consistent across long, multi-step tasks. As task cycles lengthen, even minor errors can accumulate quickly, driving up debugging costs and stretching delivery timelines. GLM-4.7 was trained and evaluated with these real-world constraints in mind.

In multi-language programming and terminal-based agent environments, the model shows greater stability across extended workflows. It already supports “think-then-act” execution patterns within widely used coding frameworks such as Claude Code, Cline, Roo Code, TRAE and Kilo Code, aligning more closely with how developers approach complex tasks in practice.
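As a rough illustration of what a "think-then-act" loop can look like, the following minimal Python sketch alternates a reasoning step with a tool call until the model returns an answer. It is not Z.ai's implementation or the API of any framework named above: `Step`, `stub_model`, `run_agent` and the `list_files` tool are hypothetical names introduced only for this example, and the model is replaced by a fixed stub so the loop can run offline.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Step:
    thought: str                   # reasoning produced before acting
    tool: Optional[str] = None     # tool to call, or None when finished
    argument: str = ""             # single string argument for the tool
    answer: Optional[str] = None   # final answer, set when tool is None


def stub_model(history: List[str]) -> Step:
    """Hypothetical stand-in for a model call; not a real API."""
    if not any(line.startswith("observation:") for line in history):
        # No tool result yet: think, then request a tool call.
        return Step(thought="I need the file list first.",
                    tool="list_files", argument=".")
    # A tool result is available: think, then finish.
    return Step(thought="I have what I need.",
                answer="done: " + history[-1])


def run_agent(task: str,
              model: Callable[[List[str]], Step],
              tools: Dict[str, Callable[[str], str]],
              max_steps: int = 5) -> str:
    """Alternate think and act steps until an answer or the step budget."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        step = model(history)                       # think
        history.append(f"thought: {step.thought}")
        if step.tool is None:
            return step.answer or ""
        if step.tool not in tools:
            # Report the bad call back to the model instead of crashing.
            history.append(f"observation: unknown tool {step.tool}")
            continue
        result = tools[step.tool](step.argument)    # act
        history.append(f"observation: {result}")
    raise RuntimeError("step budget exhausted")


if __name__ == "__main__":
    demo_tools = {"list_files": lambda path: "main.py, test_main.py"}
    print(run_agent("inspect repo", stub_model, demo_tools))
```

The explicit step budget and the handling of unknown tool names reflect the concern raised above that small errors compound over long task cycles; a real integration would replace `stub_model` with an actual model call.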

Z.ai evaluated GLM-4.7 on 100 real programming tasks in a Claude Code-based development environment, covering frontend, backend and instruction-following scenarios. Compared with GLM-4.6, the new model delivers clear gains in task completion rates and behavioural consistency. This reduces the need for repeated prompt adjustments and allows developers to focus more directly on delivery. On the basis of these results, GLM-4.7 has been selected as the default model for the GLM Coding Plan.

Reliable performance across tool use and coding benchmarks

Across public benchmarks for code generation and tool use, GLM-4.7 posts competitive results. On BrowseComp, a benchmark focused on web-based tasks, the model scores 67.5. On τ²-Bench, which evaluates interactive tool use, it scores 87.4, the highest reported result among publicly available open-source models to date.

In major programming benchmarks including SWE-bench Verified, LiveCodeBench v6, and Terminal Bench 2.0, GLM-4.7 performs at or above the level of Claude Sonnet 4.5, while showing clear improvements over GLM-4.6 across multiple dimensions.

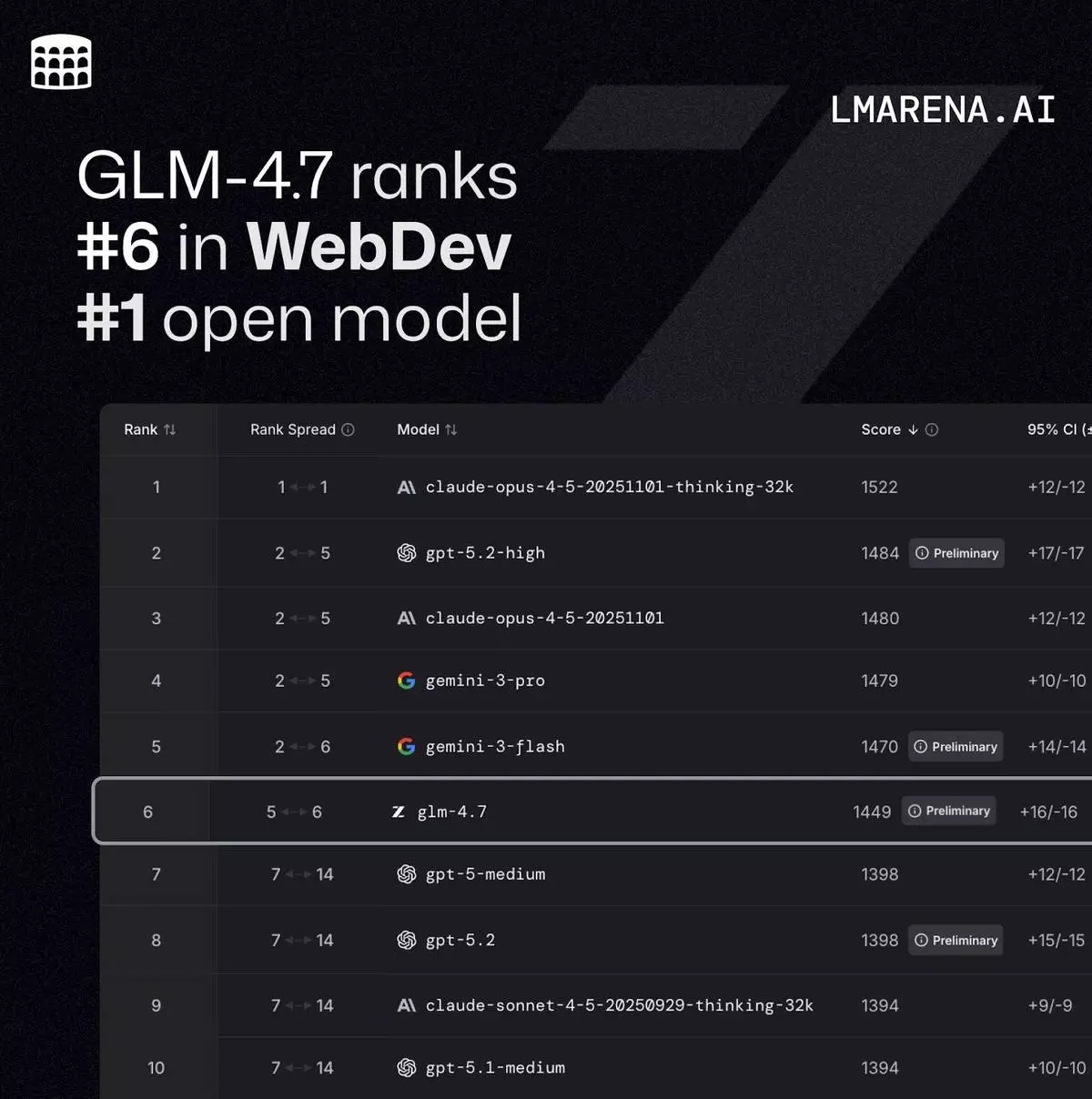

On Code Arena, a large-scale blind evaluation platform with more than one million participants, GLM-4.7 ranks first among open-source models and also holds the top position among models developed in China. Z.ai has emerged as a serious contender in the global open-source AI landscape, particularly in areas where reliability in real coding scenarios matters most.

More predictable and controllable reasoning

GLM-4.7 introduces finer-grained control over how the model reasons through long-running, complex tasks, a capability that has become a growing focus for developers as AI systems move into production. The model can maintain consistent reasoning across multiple interactions and adjust its depth of reasoning to task complexity, which makes its behaviour within agent systems more predictable over time.

Whether a model can be deployed reliably at scale has become a central question for teams building production-grade AI. It is a question that Z.ai continues to examine and refine as it develops the GLM series.

Improvements in front-end generation and general capabilities

Beyond functional correctness, GLM-4.7 shows a noticeably more mature understanding of visual structure and established front-end design conventions. In tasks such as generating web pages or presentation materials, the model tends to produce layouts with more consistent spacing, clearer hierarchy, and more coherent styling, reducing the need for manual adjustment downstream.

At the same time, improvements in conversational quality and writing style have broadened the model’s range of use cases. These changes make GLM-4.7 more adaptable to creative and interactive applications, extending its role beyond purely engineering-focused scenarios.

Ecosystem integration and open access

GLM-4.7 is available via the BigModel.cn API and is fully integrated into the z.ai full-stack development environment. Developers and partners across the global ecosystem have already incorporated the GLM Coding Plan into their tools, including platforms such as TRAE, Cerebras, YouWare, Vercel, OpenRouter and CodeBuddy. Adoption across developer tools, infrastructure providers and application platforms suggests that GLM-4.7 is beginning to move beyond research settings and into wider engineering and product use.

With GLM-4.7, Z.ai continues its long-standing approach to open-source large language models: building systems that can be used reliably in real projects. The team aims to make advanced AI more practical and dependable for developers and enterprises worldwide. As open-source models take on a more prominent role in the global technology ecosystem, Z.ai’s progress offers a clear indication of how such systems may continue to evolve, and what they might enable next.

Default Model for Coding Plan: https://z.ai/subscribe

Try it now: https://chat.z.ai/

Weights: https://huggingface.co/zai-org/GLM-4.7

Technical blog: https://z.ai/blog/glm-4.7

GLM-4.7 ranks #6 in WebDev and is the #1 open model.