
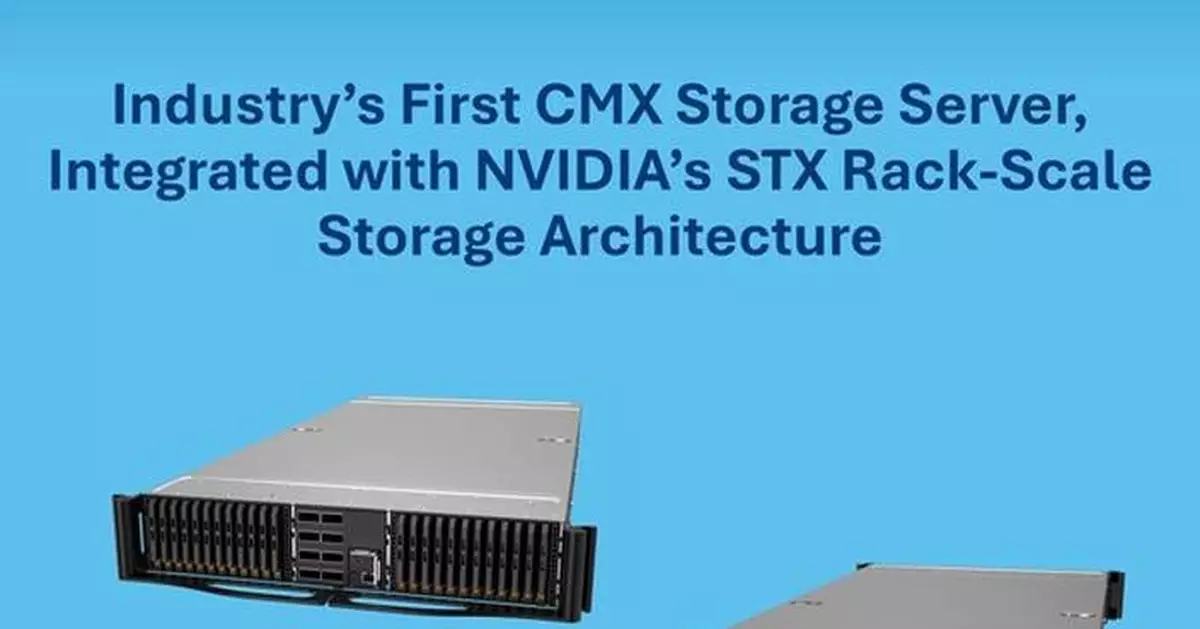

- Supermicro illustrates leadership with one of the first Context Memory (CMX) storage servers, built on the NVIDIA STX reference architecture for AI storage.

- The BlueField-4 STX storage server combines the NVIDIA Vera CPU and the NVIDIA ConnectX-9 SuperNIC.

- Supermicro's storage server builds upon last year's introduction of the Petascale JBOF all-flash array powered by NVIDIA BlueField-3.

SAN JOSE, Calif., March 18, 2026 /PRNewswire/ -- Supermicro, Inc. (NASDAQ: SMCI), a Total IT Solution Provider for AI, Cloud, Storage, and 5G/Edge, today unveiled one of the industry's first context memory (CMX) storage servers as part of the NVIDIA STX reference architecture announced at NVIDIA GTC 2026. STX is a new modular reference architecture from NVIDIA designed to accelerate the full lifecycle of AI.

"Supermicro continues to be first to market with new rack scale architectures designed to exceed the needs of a rapidly evolving AI Factory customer base," said Charles Liang, president and CEO of Supermicro. "Building upon last year's introduction of the Petascale JBOF (Just a Bunch of Flash), where we proved the feasibility of a JBOF powered by NVIDIA BlueField-3 DPUs, we have developed the CMX storage server. Our prototype of the latest storage architecture demonstrates the level of our collaboration with NVIDIA, and our commitment to be first-to-market with game changing technologies."

For more information about the new Supermicro storage server built on the NVIDIA STX reference architecture, please visit: www.supermicro.com/en/solutions/ai-storage

Leveraging the STX architecture, the CMX server is designed to address the challenge of long-lived AI queries and multi-stage chain-of-thought agentic workloads, which require access to the prior and intermediate tokens associated with the user's query. The solution both accelerates results and reduces the power that would otherwise be required to recompute them once the tokens exceed the available local storage. This token storage, called the Key Value (KV) cache, is managed by NVIDIA Dynamo, NVIDIA's inference orchestration layer.
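The trade-off described above, retrieving stored tokens instead of recomputing them, can be illustrated with a minimal sketch. It is not NVIDIA Dynamo's API or the CMX server's actual design; the class `TieredKVCache`, its `spill` tier, and the least-recently-used eviction policy are all hypothetical, chosen only to show how a larger second tier avoids recomputation when the local tier overflows.

```python
from collections import OrderedDict
from typing import Callable, Dict, Hashable


class TieredKVCache:
    """Toy two-tier cache: a small local tier backed by a larger spill tier.

    Hypothetical illustration only; it does not model any real product.
    """

    def __init__(self, local_capacity: int) -> None:
        self.local: "OrderedDict[Hashable, object]" = OrderedDict()
        self.spill: Dict[Hashable, object] = {}  # stands in for external context memory
        self.local_capacity = local_capacity
        self.recomputes = 0  # how many times a value had to be rebuilt

    def put(self, key: Hashable, value: object) -> None:
        """Store a value locally, moving the oldest entries to the spill tier."""
        self.local[key] = value
        self.local.move_to_end(key)
        while len(self.local) > self.local_capacity:
            old_key, old_value = self.local.popitem(last=False)
            self.spill[old_key] = old_value  # kept, not discarded

    def get(self, key: Hashable, recompute: Callable[[Hashable], object]) -> object:
        """Return a value from the local tier, then the spill tier, else recompute."""
        if key in self.local:
            self.local.move_to_end(key)
            return self.local[key]
        if key in self.spill:
            value = self.spill.pop(key)
            self.put(key, value)  # promote back to the local tier
            return value
        self.recomputes += 1
        value = recompute(key)
        self.put(key, value)
        return value
```

In this sketch, an entry pushed out of the local tier is later served from the spill tier, so `recomputes` does not increase for it. Without the second tier, the same request would trigger a recompute.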

As the STX solution comes to market, Supermicro will be working with these software partners and others on porting and validation. Additionally, Supermicro's long-standing relationships with leading SSD providers such as Micron, Samsung, Phison, and others will enable testing for the specific STX architecture requirements.

At GTC 2026, Supermicro also announced seven AI Data Platform solutions based on the RTX PRO 6000 Blackwell Server Edition GPU with NVIDIA and storage partners such as Cloudian, DDN, Everpure (formerly Pure Storage), IBM, Nutanix, VAST Data, and WEKA. The AI Data Platform enables enterprises to process their data for AI workloads. The CMX server is being shown at Supermicro booth #1113 and at the NVIDIA exhibit at NVIDIA GTC 2026, March 16-19.

About Super Micro Computer, Inc.

Supermicro (NASDAQ: SMCI) is a global leader in Application-Optimized Total IT Solutions. Founded and operating in San Jose, California, Supermicro is committed to delivering first-to-market innovation for Enterprise, Cloud, AI, and 5G Telco/Edge IT Infrastructure. We are a Total IT Solutions provider with server, AI, storage, IoT, switch systems, software, and support services. Supermicro's motherboard, power, and chassis design expertise further enables our development and production, enabling next-generation innovation from cloud to edge for our global customers. Our products are designed and manufactured in-house (in the US, Asia, and the Netherlands), leveraging global operations for scale and efficiency and optimized to improve TCO and reduce environmental impact (Green Computing). The award-winning portfolio of Server Building Block Solutions® allows customers to optimize for their exact workload and application by selecting from a broad family of systems built from our flexible and reusable building blocks that support a comprehensive set of form factors, processors, memory, GPUs, storage, networking, power, and cooling solutions (air-conditioned, free air cooling or liquid cooling).

Supermicro, Server Building Block Solutions, and We Keep IT Green are trademarks and/or registered trademarks of Super Micro Computer, Inc.

All other brands, names, and trademarks are the property of their respective owners.

** This press release is distributed by PR Newswire through automated distribution system, for which the client assumes full responsibility. **

Supermicro Among First to Unveil NVIDIA BlueField-4 STX Storage Server to Improve AI Inference Performance

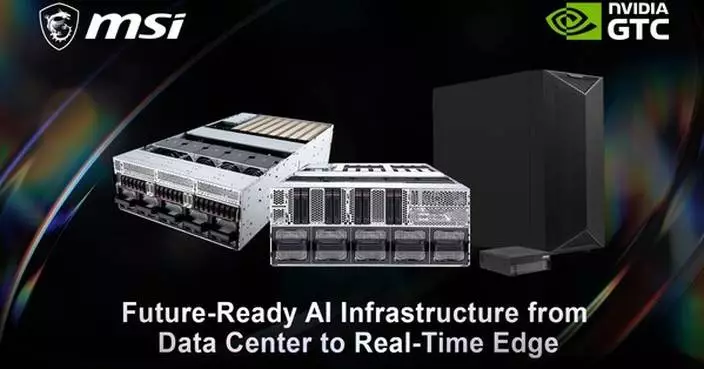

Empowering Enterprises to Deploy Megawatt-Scale AI Data Centers based on NVIDIA MGX

News Summary

LITEON Technology will showcase next-gen AI data center solutions at NVIDIA GTC 2026, including solutions for the NVIDIA Vera Rubin platform and 800 VDC power rack architecture, 110 kW Power Shelf, liquid cooling systems and racks based on NVIDIA MGX, and 2.1 MW in-row CDU. LITEON's 800 VDC solution integrates high-efficiency power modules, DC power distribution, and system-level energy management to meet the dynamic load demands of AI servers, while enhancing liquid-cooling and thermal management flexibility and improving overall operational efficiency. LITEON will continue to expand its collaboration with NVIDIA to advance high voltage DC power architecture, power conversion, mechanical design, and liquid cooling integration to meet the growing demands for energy efficiency, power density, and resilience in the AI era.

SAN JOSE, Calif., March 18, 2026 /PRNewswire/ -- LITEON Technology (2301.tw) is participating in NVIDIA GTC 2026 from March 16 to 19, unveiling a comprehensive portfolio of next-generation AI data center solutions. The showcase features solutions designed for the NVIDIA Vera Rubin platform, including high-efficiency power systems based on next-generation architectures, advanced rack systems, and liquid cooling technologies. Key highlights include the 800 VDC Power Rack, 110 kW Power Shelf, liquid-cooling systems and racks based on NVIDIA MGX, 2.1 MW in-row CDU, and power bricks. These offerings are designed to accelerate customers' deployment of megawatt-scale AI data centers and address the increasing demands on compute performance and energy management in the AI era.

As AI workloads drive rapid increases in rack power density, data-center power architectures are undergoing structural transformation. Traditional power-shelf architectures face efficiency and current-handling limitations when supporting megawatt-scale AI clusters. In an 800 VDC environment, power architectures are gradually shifting toward a power rack configuration. By increasing system voltage and reducing current load, this approach fundamentally improves power distribution efficiency and overcomes limitations in power density. This evolution represents not only a power-system upgrade, but also a re-architecture of data-center infrastructure.
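The link between higher voltage, lower current, and better distribution efficiency follows from two basic relations: current is power divided by voltage (I = P / V), and resistive loss in a conductor is I² × R. The short sketch below works through that arithmetic. Only the 800 V figure comes from this release; the 1 MW load, the 50 V comparison bus, and the path resistance are hypothetical values chosen to keep the numbers simple, not LITEON specifications.

```python
def bus_current_a(power_w: float, voltage_v: float) -> float:
    """Current in amperes needed to deliver power_w at voltage_v (I = P / V)."""
    if voltage_v <= 0:
        raise ValueError("voltage must be positive")
    return power_w / voltage_v


def conduction_loss_w(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Resistive loss in watts along the distribution path (P_loss = I^2 * R)."""
    current = bus_current_a(power_w, voltage_v)
    return current * current * resistance_ohm


HIGH_BUS_V = 800.0            # from the release: 800 VDC
LOAD_W = 1_000_000.0          # hypothetical 1 MW load
LOW_BUS_V = 50.0              # hypothetical low-voltage bus for comparison
PATH_RESISTANCE_OHM = 1e-4    # hypothetical conductor resistance

if __name__ == "__main__":
    for volts in (LOW_BUS_V, HIGH_BUS_V):
        amps = bus_current_a(LOAD_W, volts)
        loss = conduction_loss_w(LOAD_W, volts, PATH_RESISTANCE_OHM)
        print(f"{volts:.0f} V bus: {amps:.0f} A, {loss:.2f} W lost in the path")
```

Under these assumed values, raising the bus from 50 V to 800 V cuts the current by a factor of 16 and the resistive loss over the same conductor by a factor of 256 (16 squared). Real systems differ in conductor sizing and conversion stages, so the figures indicate the trend only.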

LITEON's 800 VDC solution integrates high-efficiency power modules, DC distribution designs, and system-level energy-management capabilities. It supports the stringent dynamic-load requirements of AI servers and accelerated computing platforms while enabling greater flexibility for liquid-cooling and thermal-management systems. The high-voltage architecture simplifies the power hierarchy inside data centers and enhances deployment speed and long-term operational efficiency.

"AI data centers are entering a critical phase where power and thermal systems must be re‑architected together," said John Chang, General Manager of LITEON Cloud Infrastructure Platform and Solution SBG. "Power systems are no longer merely supporting functions; they are becoming one of the core elements of the data center. Through high‑voltage DC designs, we help customers achieve optimal balance among power density, energy efficiency, and infrastructure costs."

As AI computing platforms rapidly expand, LITEON will continue deepening collaboration with ecosystem partners and advancing power-optimization technologies for high-performance GPUs and AI‑accelerated systems. Through close collaboration with NVIDIA in high-performance computing (HPC) and AI infrastructure, LITEON is accelerating its development of next-generation integrated data-center solutions, covering 800 VDC, high-efficiency power conversion, mechanical design, and liquid-cooling integration. These efforts address the structural requirements for high energy efficiency, high power density, and operational resilience in the AI era.

For more information, please visit: https://www.liteon.com/zh-tw/solutions/green-data-center.

LITEON Technology at 2026 GTC:

Date: March 16–19, 2026

Venue: San Jose McEnery Convention Center, USA

Booth: 635

** This press release is distributed by PR Newswire through automated distribution system, for which the client assumes full responsibility. **

LITEON Showcases Next-Generation 800 VDC and NVIDIA Vera Rubin Platform Solutions at NVIDIA GTC 2026