GONZALES, Texas, March 17, 2026 /PRNewswire/ -- As the U.S. cattle herd falls to a 75-year low and beef prices climb to record highs, Texas ranchers are under growing pressure to protect every acre of usable pasture. Yet invasive brush continues to spread, and conventional control methods often prove too costly, too imprecise or too limited by terrain. Agricultural drones are changing that equation, offering a more practical way to deliver herbicide treatments in dense brush and hard-to-reach areas without damaging the soil or grass underneath.

When the Brush Takes Over

That challenge is creating growing demand for brush control specialists like Curtis Schramm, owner of Texas Agridrone Services. He operates in Gonzales County, a Texas cattle region where livestock accounts for 93 percent of agricultural sales and row crops make up just 10 percent of farmland. There, brush encroachment is visible and relentless. According to Texas A&M research, a single adult mesquite tree can consume up to 20 gallons of water per day during peak growing season, while prickly pear density can increase 25 to 30 percent each year during prolonged drought. In a region that depends almost entirely on rainfall, that kind of pressure steadily shrinks the amount of usable pasture available to ranchers.

The traditional answers work only when the economics align. In Schramm's experience, a 29-acre brushy pasture is too small for a half-million-dollar ground rig to bother with. A helicopter can cover hundreds of acres an hour but won't spray precisely over a dense thicket. And shredding with a tractor makes things worse: it stresses the plants, accelerates regrowth, and leaves fields full of stumps that prevent other equipment from entering afterward.

"A lot of my work is a fix to poor management practices from decades, even lifetimes of ranchers and landowners mismanaging property," says Schramm, "letting native brush and other invasive species come in and take over."

A Different Kind of Precision

Brush work comes with a different set of demands than open-field spraying. Schramm's jobs involve dense, irregularly shaped thickets, damaged terrain, and pastures laced with electrical lines. Although agricultural spray drones are built to handle a range of priorities, he knows that penetration and precision matter most for his work. "What good would a wide swath do me if it didn't have the force to push the chemical all the way down through the canopy?" he says.

He chose the XAG P150, starting operations in August 2025. The quad-rotor design generates a strong, concentrated downdraft, and paired with the RevoSpray System, it reaches a maximum flow rate of 7.9 gallons per minute (30 liters per minute), pushing herbicide uniformly through canopy layers to the understory below. When Schramm hovers over a dense mesquite stand, landowners expect the spray to drift down gently, like rain under a tree. What they see instead surprises them every time. "When the drone goes over, the plants swirl, the ground's blowing up in the dust, and the chemical reaches every bit of the plant, not just the soil. They're always impressed."

The drone's 4D imaging radar detects obstacles between 5 and 328 feet (1.5 to 100 meters) along the flight path, enabling safe operation across the uneven, obstacle-heavy terrain Schramm navigates daily. Real-time 3D terrain mapping enables autonomous flight without a preloaded map, adjusting continuously to each new field's contours. "The way it quickly adjusts... it makes me laugh every time," he says. "That's incredible to me, because I'm thinking about all that math that has to compute to know what the speed, height, and all those variables to make a decision in a millisecond."

The P150's foldable design also lets Schramm load, launch, and manage a full day's operation alone. For a small-business owner in his first years of operation, working solo means lower overhead and more profit. It's also a practical necessity: his jobs are often on properties where larger equipment simply can't reach.

The results have been clear. His first job, a shredder-damaged pasture of mixed mesquite, prickly pear, and huisache, looked like a war zone one month later. "It killed everything but the grass," Schramm says, recalling how the landowner called him out to come see it.

In his first season, August through December 2025, he completed 682 acres of brushwork without losing a single client. For each of those ranchers, recovered pasture means more grass, more cattle weight, and more income — a chain of returns that begins with getting the brush out.

Land, Cattle, and Family

Schramm didn't arrive here through a straight line. He spent decades connected to the land, first through his family's poultry operation for Tyson Foods from 1977 to 2015, and before that through his grandfather, a county conservation resource manager for 31 years who taught young Curtis to identify grasses, understand invasive plants, and know when and how to treat them. "All those techniques I grew up learning turned out to be something I needed when I was in my late 40s and early 50s," he laughs. "Here I am doing this."

For Schramm, the work has always been about more than brush. "If you're in a drought, you need to feed your cattle as cheaply as possible," he says. "The quicker you can put weight on, the more money you can make." He's brought his refill cycle down to under 32 seconds, with batteries swapped, the tank refilled, and the drone back in the air, because every minute on the ground is a minute not serving that chain. "The idea is to feed your land to feed your cattle to feed your family."

"It's my life now," he says. "When I wake up at 4 in the morning to go somewhere and do something with the drone, I'm super excited. It's been something that's filled my life, and for my family, very well."

** This press release is distributed by PR Newswire through automated distribution system, for which the client assumes full responsibility. **

For Texas Ranchers Fighting Invasive Brush, XAG Drones Are Changing the Odds

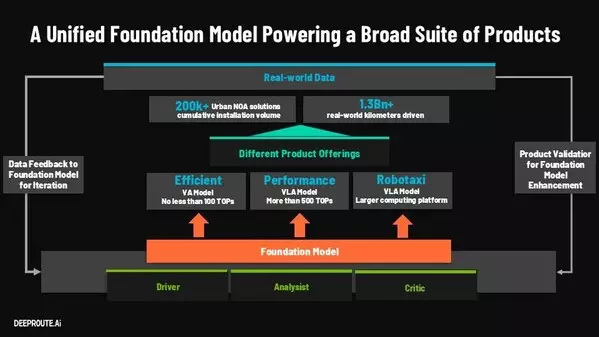

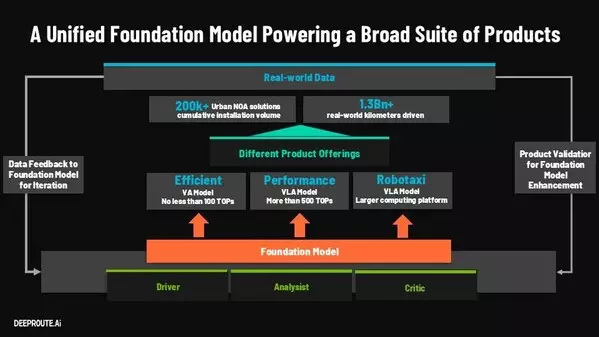

SAN JOSE, Calif., March 17, 2026 /PRNewswire/ -- At NVIDIA GTC 2026, DeepRoute.ai presented a comprehensive introduction to its 40-billion-parameter Vision-Language-Action (VLA) Foundation Model architecture, representing a fundamental breakthrough in autonomous driving development. The model introduces a unified architecture that integrates perception, reasoning, and action, enabling systems not only to drive, but to understand and evaluate their own decision-making in real time.

DeepRoute.ai has already achieved significant commercial success, having delivered its advanced autonomous driving systems across more than 250,000 production vehicles. In October 2025 alone, DeepRoute.ai captured nearly 40% of the market among third-party suppliers in the high-level autonomous driving segment. Building on this momentum and fueled by the continuous evolution of its Foundation Model, the company is targeting deployment of one million vehicles equipped with its advanced driving solutions by the end of 2026.

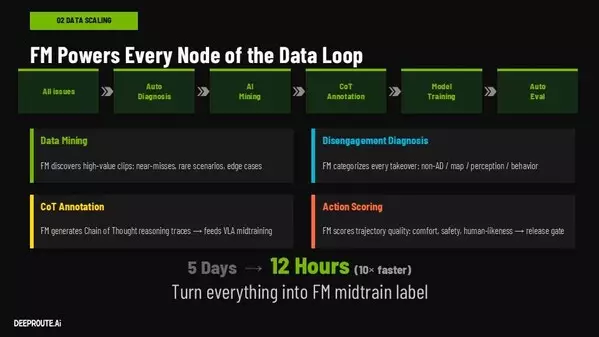

Breaking the Bottleneck: From Days to Hours

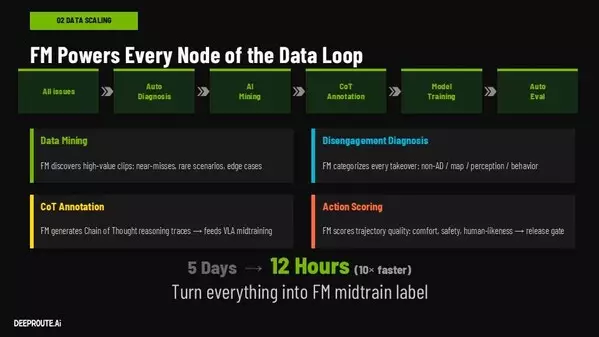

Autonomous driving development has long been hampered by the inefficiencies of traditional "data closed-loop" workflows. In conventional systems, data must be manually collected, reviewed, and annotated before the model is retrained, a process that typically requires more than five days per iteration. Meanwhile, companies accumulate vast volumes of raw driving data, most consisting of routine scenarios that offer limited training value and can even degrade model performance.

"At its core, autonomous driving is a scaling problem," said Tongyi Cao, CTO of DeepRoute.ai. "While the industry has made significant progress, true large-scale deployment remains elusive because traditional execution paths are flawed. The bottleneck is no longer about acquiring data; it is about how efficiently a system can filter out the noise and convert massive amounts of raw data into high-value training samples."

DeepRoute.ai's solution: compress the data processing cycle from over five days to approximately 12 hours through intelligent automation.

One Model, Three Roles: Driver, Analyst, and Critic

The 40B VLA Foundation Model performs three complementary functions simultaneously:

The Driver – Executes real-time driving actions based on visual inputs

The Analyst – Identifies critical driving events and explains decisions through causal reasoning

The Critic – Evaluates trajectories for safety, comfort, and human-like behavior

"Our solution to the industry's scaling bottleneck is a unified, 40-billion parameter Vision-Language-Action Foundation Model," Cao explained. "This model goes beyond basic vehicle control. It possesses the capability to analyze data and evaluate driving behavior. Simply put, this model serves not only as the 'driver,' but simultaneously as the 'analyst' and the 'critic.'"

By embedding these capabilities within a single foundation model, DeepRoute.ai has automated large portions of the data pipeline. The system autonomously identifies high-value events such as near-misses and rare scenarios, performs root-cause analysis, and generates reasoning annotations, all without manual intervention.

A Self-Evolving Data Flywheel

The architecture enables a self-reinforcing development cycle where improvements in driving performance directly enhance the system's ability to process and curate its own training data.

"Traditional data closed loops are highly dependent on manual human processes, which severely limits iteration speed," said Cao. "By leveraging our Foundation Model, we have entirely reconstructed this workflow. The model autonomously handles data mining, reason diagnosis, and behavior scoring. Every single iteration of this workflow compounds directly into a measurable enhancement of our AI capabilities."

This self-evolving flywheel accelerates capability growth while dramatically reducing reliance on manual labeling.

Scale and Momentum: 250K to 1M Vehicles

"By the end of 2025, we successfully delivered over 250,000 mass-produced vehicles equipped with DeepRoute.ai's autonomous driving systems," Cao said. "The Foundation Model serves as the core cornerstone for DeepRoute.ai's next-generation autonomous driving assistance and functions as a fundamental AI framework for the physical world. This unified architecture enables the system to go beyond mere execution; it understands complex traffic environments, explains the underlying logic of its decisions, and evaluates driving behaviors. This evolution provides its autonomous driving systems with more comprehensive cognitive and decision-making capabilities."

Through its presentation at GTC 2026, DeepRoute.ai demonstrated how its 40B Vision-Language-Action Foundation Model architecture is accelerating the path to scalable, safe autonomous driving through continuous, data-driven learning and rapid iteration cycles.

About DeepRoute.ai

DeepRoute.ai is a leading artificial intelligence company developing advanced autonomous driving systems. Driven by the vision of achieving Artificial General Intelligence (AGI) for the physical world, the company leverages state-of-the-art foundation models to deliver highly reliable, safety-first autonomous driving solutions. Backed by top-tier investors with over $700 million in funding, DeepRoute.ai has successfully deployed its systems across more than 250,000 mass-produced consumer vehicles. By prioritizing scalable and innovative smart mobility, the company is establishing a robust foundation to pioneer the future of commercial Robotaxi operations.

** This press release is distributed by PR Newswire through automated distribution system, for which the client assumes full responsibility. **

DeepRoute.ai Presents 40B Vision-Language-Action Foundation Model at NVIDIA GTC 2026, Accelerating Autonomous Driving at Scale
