SAN JOSE, Calif., March 18, 2026 /PRNewswire/ -- MSI, a global leader in high-performance server solutions and Edge AI, unveils its comprehensive AI ecosystem at NVIDIA GTC 2026. In addition to launching servers based on the NVIDIA MGX architecture and powered by NVIDIA Blackwell GPUs, MSI introduces the XpertStation WS300, built on the NVIDIA DGX Station architecture. MSI also showcases the OmniGuard smart patrol vehicle, integrated with the NVIDIA Alpamayo-R1 Vision-Language-Action (VLA) inference model, demonstrating a complete workflow from AI infrastructure and Digital Twin validation to real-world deployment.

High-Performance Foundations: MSI servers based on NVIDIA MGX

Based on the modular design of the NVIDIA MGX architecture, MSI has engineered a robust portfolio of 4U and 6U liquid-cooled servers supporting NVIDIA RTX PRO 6000 Blackwell Server Edition and NVIDIA RTX PRO 4500 Blackwell Server Edition GPUs. These platforms are optimized to accelerate a wide range of AI workloads, from data center deployments to edge applications. This flexible architecture lets enterprises tailor CPU, memory, and networking configurations to meet specific performance, scalability, and workload demands.

- CG480-S5063: A flagship 4U server based on NVIDIA MGX, featuring dual Intel® Xeon® 6 processors, eight dual-width PCIe GPU slots, and 32 DDR5 DIMM slots for exceptional memory scalability. It supports up to 20 PCIe Gen5 E1.S NVMe drives for ultra-high data throughput.

- CG481-S6053: Powered by dual AMD EPYC™ 9005 Series processors to maximize core density and I/O bandwidth. It integrates eight PCIe 5.0 GPU slots, 24 DDR5 DIMM slots, and eight 400G Ethernet ports via NVIDIA ConnectX-8 SuperNICs, and is designed for compute-intensive AI clusters and HPC simulations.

- CG681-S6093: A 6U liquid-cooled AI platform with a dual-socket architecture, equipped with eight dual-width PCIe GPUs. It integrates eight 400G Ethernet ports via NVIDIA ConnectX-8 SuperNICs to deliver exceptional performance and efficiency for high-density AI data center deployments.

Advanced Thermal Engineering and Future-Ready AI Innovation

MSI's platforms based on NVIDIA MGX integrate liquid cooling and optimized air-cooling designs to sustain peak performance under the most rigorous AI workloads. Leveraging exceptional GPU throughput and high-speed connectivity, MSI is demonstrating AI-powered intelligent video search and automated summarization. These capabilities optimize multi-camera analytics for smart cities and industrial inspection, helping enterprises rapidly extract actionable intelligence from massive datasets and make decisions more efficiently.

To reinforce its commitment to next-generation AI computing, MSI is also showcasing an NVIDIA Vera CPU option, highlighting its ongoing innovation in data-driven, AI-powered infrastructure solutions.

MSI XpertStation WS300: Data Center-Class AI Power at the Desk

For researchers requiring massive compute power in a deskside form factor, MSI announced the XpertStation WS300, available starting March 16.

- Core Architecture: Built on the NVIDIA DGX Station architecture, the WS300 is powered by the NVIDIA Grace Blackwell Ultra Desktop Superchip and features 748GB of coherent memory.

- Connectivity and Power: Equipped with dual 400GbE ports via NVIDIA ConnectX-8 and a 1600W ATX power supply, the system delivers unprecedented AI acceleration directly at the desktop with plug-and-play supercomputing.

"The growth of Generative AI and LLMs has driven extreme demand for underlying infrastructure," said Danny Hsu, General Manager of Enterprise Platform Solutions at MSI. "Through our platforms based on NVIDIA MGX and XpertStation WS300, MSI is extending data center-level momentum to the developer's desk, accelerating innovation across the data center, the edge, and the desktop."

Real-World Impact: Reducing Deployment Risk with Digital Twins & EdgeXpert

MSI uses NVIDIA Omniverse libraries and the NVIDIA Isaac Sim open simulation framework to create high-precision virtual environments that eliminate uncertainties in physical deployments. Through Sim2Real (Simulation to Reality) technology, the OmniGuard patrol vehicle underwent rigorous virtual testing of patrol routes and pedestrian interactions to ensure functional validation before real-world implementation.

- Core Intelligence: NVIDIA Alpamayo-R1 Autonomous Decision System

OmniGuard deeply integrates the Alpamayo-R1 model, granting the vehicle "thought-and-action" perception to master complex long-tail scenarios. Testing confirms a 12% increase in navigation planning accuracy and a 35% reduction in off-road rates.

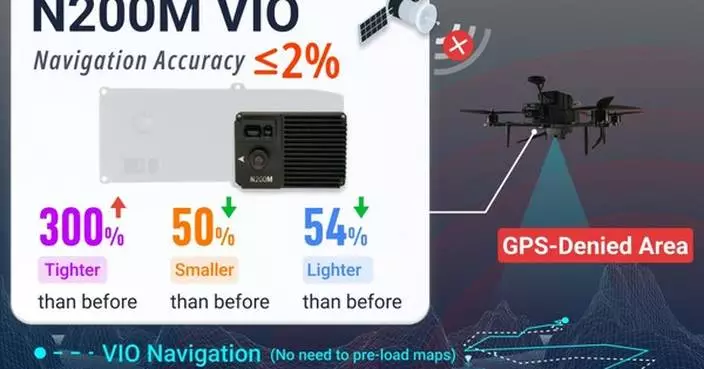

- Scalable Edge AI Implementation:

Models are deployed and monitored via the MSI EdgeXpert platform. The vehicle is powered by the NVIDIA Jetson platform for real-time edge processing, ensuring reliable performance in dynamic environments. This architecture can be rapidly extended to smart factories, logistics parks, and public infrastructure.

David Wu, General Manager of Customized Product Solutions at MSI, commented: "The value of AI lies in solving real-world pain points. Powered by NVIDIA accelerated computing, Digital Twins, and Alpamayo inference technology, MSI has established a seamless workflow from virtual validation to physical deployment. This not only shortens development cycles but also demonstrates MSI's strength in driving the comprehensive implementation of Edge AI applications."


GTC 2026: MSI Drives End-to-End AI Implementation by Bridging Cloud Computing and Autonomous Edge Inspection