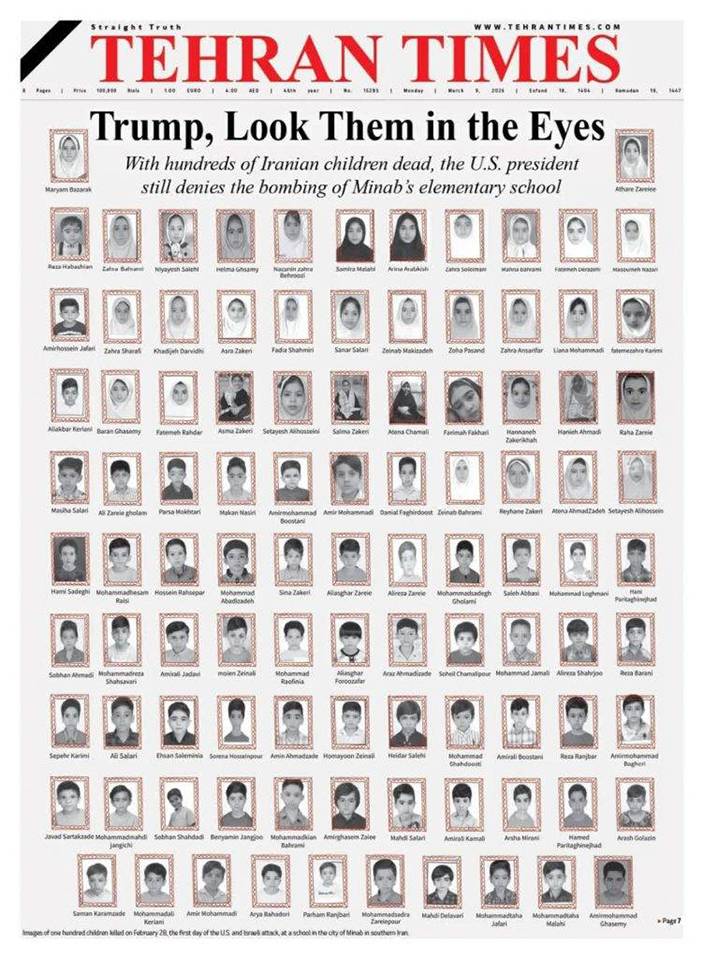

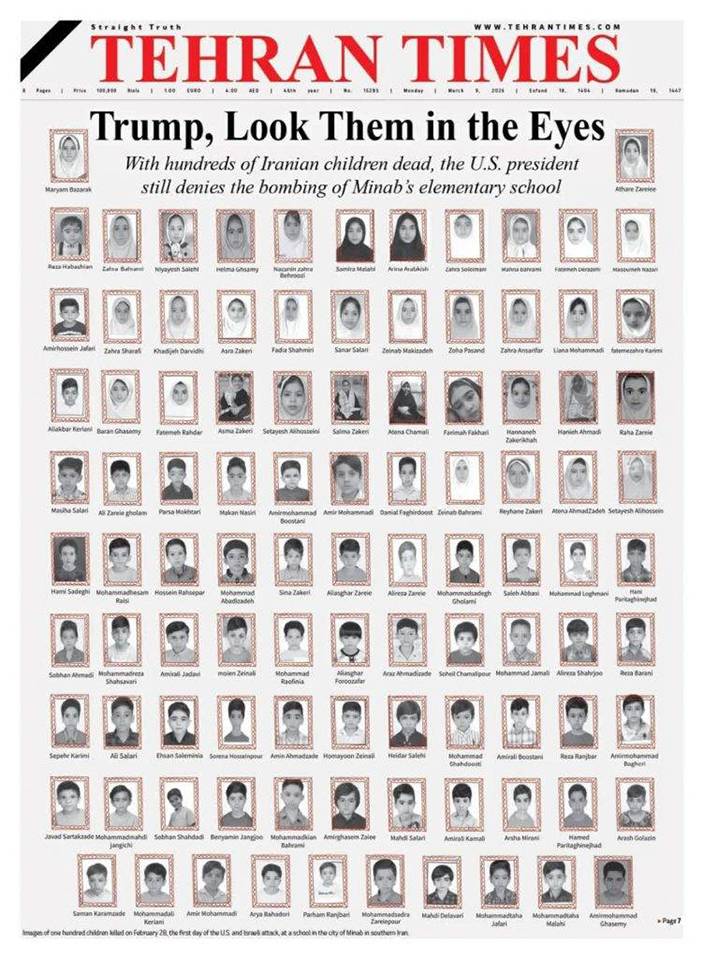

Call it a jaw-dropping case of tech-enabled tragedy. The US military, long boasting of the world's most precise intelligence and the strictest rules of engagement, somehow paired a map that may be older than the schoolchildren themselves with the most cutting-edge AI system money can buy. The result: a Tomahawk missile delivered straight into a girls' school in Iran that was packed with children.

As 165 innocent lives were consumed by flames, Washington's script flipped fast. The iron-clad claim that "we never target civilians" gave way to a fresh pair of deflections: "outdated intelligence" and "AI might be responsible." The whole absurd production, co-starring aging errors and fresh prevarications, is a perfect illustration of the American-style double standard.

When AI Aces the Wrong Test

According to reports in both The Washington Post and The New York Times, the girls' school in Minab, Iran, had been separated from the neighboring naval base by a wall since 2015; it was painted pink and blue and had its own sports field. Yet in the US military's target database, it was still labeled a "military facility." That antique, decade-old intelligence, never re-verified amid the rapid-fire pace of US-Israeli strikes against Iran, was fed directly into the newest AI combat system.

The system at the heart of the disaster was no ordinary tool. Built by combining Palantir's Maven platform with Anthropic's Claude model, it had been praised by American generals as capable of "processing massive data within seconds" and "compressing weeks of planning into real-time decisions." A battlefield revolution, they called it.

Two people familiar with the system told The Washington Post exactly how it operated during preparations for the Iran strike. Maven automatically recommended targets, generated precise coordinates, and ranked them by priority. With Claude integrated, the machine shifted into overdrive — converting weeks of planning into split-second action, with post-strike assessments auto-generated after every hit.

The old computing axiom applies with lethal precision here: garbage in, garbage out. With staggering efficiency, the AI flagged the school's coordinates as a "high-priority target" and passed the recommendation up the chain. Washington insists "the final call was made by humans." The reality is: in a wartime machine running at full throttle, churning through thousands of AI-filtered targets by the minute, that so-called human review was little more than a rubber stamp.

Evasion as Standard Procedure

The truth is: AI did not create this error. It executed human negligence at the speed of light. A system built to cut through the "fog of war" became, instead, a high-efficiency accelerator of tragedy.

The US response that followed ran like a well-rehearsed script: a "standard procedure" display of America's double standard.

Before the bombing, Secretary of Defense Pete Hegseth strutted in front of the cameras to declare: "Unlike our adversary Iran, we never target civilians." After the bombing, his office passed every core question to US Central Command like a hot potato.

CENTCOM declined all comment, citing "an ongoing investigation."

Trump first bellowed: "In my opinion, based on what I've seen, that was done by Iran…" Then, as mounting evidence pointed to the US, he shrugged with a casual: "I just don’t know enough about it."

From flat-out denial to technical deflection, and finally to the commander-in-chief's practised ignorance — responsibility dissolved entirely. The contrast is blinding. This is the same Washington that thunders with moral certitude whenever another country is accused of "human rights abuses" or "violating international law." The script is numbingly familiar: rigor and accountability are instruments for judging others, never for examining oneself.

If the Roles Were Reversed

Try a thought experiment. If a US or Israeli school were bombed, killing over a hundred children, and the attacking country explained it away with "outdated maps" and "AI-recommended targets," how would the world — especially Washington — react?

The thunderous outrage is easy to predict. "Barbaric act!" "State terrorism!" "A blatant war crime!" The UN Security Council would convene an emergency meeting. The International Criminal Court would open an investigation. Crippling sanctions and diplomatic isolation would arrive swiftly, all in the name of justice. That is precisely what defines a double standard: two completely different lenses, a magnifying glass for others' wrongs and a funhouse mirror for its own absolution.

Blood No Jargon Can Wash Out

The most chilling part of this tragedy is not the technical failure itself — such things are not unheard of in war. It is the cold, bureaucratic fluency of the post-crisis response. Human deaths get reduced to "delayed database updates," "AI system limitations," and "accelerated operational tempo." Burned backpacks and shattered childhood dreams are recast as mere "system errors" in the modern machinery of warfare.

While US missiles were slaughtering civilians on foreign soil during Ramadan — blame conveniently offloaded onto "outdated intelligence" and "AI" — Washington's legal teams were simultaneously suing AI firms for daring to impose safety restrictions on military use. That is the pinnacle of double standards: deploying the most advanced technology to commit the most primitive crimes, then hiding behind the most elaborate jargon to escape the simplest moral reckoning.

In the end, neither the rotting old maps, nor the gleaming new AI, nor Washington's ever-evolving vocabulary of blame, can wash the bloodstains from the rubble of that school. Those stains do not mark the failure of technology. They mark the moral collapse of an empire that lost its compass long ago.

Beacon Institute

**Authors are solely responsible for their blog posts, which do not represent the position of this company.**

Trump has turned crisis management into a kind of dark stand‑up routine. The president who helped light the fuse in the Middle East, triggering a showdown that choked off the Strait of Hormuz, now shrugs on camera and boasts that keeping this energy lifeline open is “an honor,” claiming he is doing China and other countries a favour. Yet just off camera, his closest Asia‑Pacific ally, Japan, is staring into an economic abyss precisely because this artery is being squeezed shut. One self‑styled “saviour” on stage, one shivering “vassal state” in the wings – the contrast is a textbook case of selective blindness in today’s power politics.

Trump’s “Honor” Show

According to Fox News, Trump sat in Florida and fielded a question about the Strait of Hormuz, now effectively blockaded after US‑Israeli strikes on Iran. He showed no hint of remorse for creating the mess, instead striking a saviour pose and vowing to keep the strait open, framing it as a favour to “China and other countries” that rely on this corridor. “We’re really helping China here and other countries because they get a lot of their energy from the straits,” he boasted, before adding with a shrug: “We have a good relationship with China. It’s my honor to do it.”

The truth is Trump is skipping over a basic fact: it was the military action he authorised that triggered Iran’s retaliatory squeeze on the strait in the first place. Dressing up a self‑inflicted crisis as a benevolent favour is the political equivalent of an arsonist claiming it is his “honor” to help fight the blaze – a fire he cannot actually put out.

What really decides whether ships get through Hormuz is not some abstract American umbrella. Iran’s Islamic Revolutionary Guard Corps has explicitly said that vessels belonging to the United States, Israel, European countries and their supporters are barred from the strait. That means Chinese passage is fundamentally a function of Tehran’s calculus about its ties with Beijing – not the product of Trump’s self‑promoted “help.”

Ships Rebranding Themselves “Chinese”

On the water, the behavioural evidence cuts the other way. Instead of basking under US “protection,” a string of non‑Chinese commercial vessels are scrambling to cosplay as “Chinese” just to get through. Media reports say that in the past week at least 10 ships have altered their Automatic Identification System (AIS) entries to tag “Chinese Owner,” “All Chinese Crew” or “Chinese Crew Onboard” in their signal data.

One bulk carrier, tellingly named Iron Maiden, flipped its AIS status to “CHINA OWNER” before making a dash through the strait, and got through safely. Another vessel, the Liberia-flagged Sino Ocean, pulled the same trick, broadcasting “CHINA OWNER_ALL CREW” as it threaded the chokepoint. This kind of “flag-switching for survival” already surfaced during the 2023 Red Sea crisis; the Strait of Hormuz is now replaying the same script on a bigger stage.

In other words, Trump’s claim of “helping China” is a mirage. He cannot stop Iran from tightening or loosening its blockade – a blockade his own decisions set in motion – and he cannot plausibly offer blanket naval escort to every commercial ship in line. The real invisible shield for some vessels is China’s neutral, non-aligned posture in the conflict – the very “fence-sitting” Trump loves to attack. Those ships suddenly proclaiming “Chinese Owner” are casting a hard-nosed vote on which label actually offers safety in a sea of risk.

Japan Panics, Trump Shrugs

What makes Trump’s comments even more jarring is the blind spot baked into his phrase “other countries.” The list clearly does not include the ally now being squeezed the hardest by the strait’s closure: Japan.

Set against Trump’s breezy talk of “honor,” Japan is in full‑blown alarm mode. For Tokyo, the Strait of Hormuz is not just a lane on the map – it is the windpipe of the economy, the channel through which its industrial lifeblood flows.

Japan sources more than 90% of its crude oil from the Middle East, and roughly three‑quarters of that needs to move through the Strait of Hormuz. Around 28% of its liquefied natural gas (LNG) is also routed via this chokepoint, meaning any closure effectively slams shut the artery feeding Japanese industry.

Think of the economic hit as a slow‑motion body blow. Multiple institutions estimate that a prolonged shutdown could shave between 0.65% and 3% off Japan’s GDP, while every 10‑dollar jump in global oil prices adds roughly 1.3 trillion yen – about 8.5 billion US dollars – to its annual crude import bill, swelling the trade deficit and fuelling imported inflation. Tokyo’s stock market has already been hammered by successive sell‑offs as investors price in the shock.

On paper, Japan holds about 254 days of oil reserves, but those barrels are scattered and not easy to deploy at speed. The more immediate danger is on the gas side: LNG inventories cover only about three weeks of demand, so any prolonged cut-off threatens rolling blackouts and factory shutdowns.

Industry Feels the Squeeze

Major petrochemical player Idemitsu Kosan has already warned it may have to halt output if naphtha – the key feedstock for ethylene – stops arriving. Japan’s flagship export sectors, from autos to electronics, all guzzle energy, so fuel shortages plus soaring costs will carve away their competitive edge on the global stage.

Prime Minister Sanae Takaichi’s government has rushed to set up an emergency task force, while some refiners are already applying to tap national strategic crude reserves. Chief Cabinet Secretary Minoru Kihara admits the government is “carefully assessing” whether to dispatch Self‑Defence Forces if Washington comes calling – but none of that changes the basic fact on the ground: Japan has been turned into the most immediate economic casualty of a war launched under the US banner.

The Deal, the Pawn, the Spotlight

Trump’s ability to ignore Japan’s distress – while theatrically claiming the “honor” of helping China – lays bare a brutally transactional America-First logic.

First is the price tag of The Art of the Deal. In Trump’s deal-sheet worldview, an ally’s worth depends on how much it can pay up right now. Japan is an old ally, but in his eyes it has fallen short on trade concessions and defence burden-sharing in recent years. Trump, meanwhile, gains more political mileage by talking up how he is “helping China,” posturing as a global leader – however absurd that sounds – and banking the rhetoric as a future bargaining chip with Beijing.

Second is the disposability of strategic pawns. Japan’s security and diplomacy are lashed tightly to Washington, leaving Tokyo almost no room to say no. That asymmetric dependence makes it easy for the United States to shift alliance costs onto Japan – from following anti-Russia sanctions to deepening its reliance on Middle East energy – without feeling compelled to protect Japanese core interests when a crisis actually hits.

Third is the spotlight of domestic politics. Trump’s talking points are tailored first and foremost for voters at home, not partners abroad. Emphasising that he is “helping China” simultaneously feeds an appetite for “challenging China” and burnishes his image as a strongman in control of chaos, while Japan’s energy nightmare is too remote and too technical to fire up a rally crowd.

Finally comes the tried-and-true “maker and solver” routine. Trump is adept at turning problems of his own making into crises only he can supposedly fix. His threat to hit Iran “twenty times harder” is less about shielding allies than about flexing firepower and setting the stage for further escalation. Japan’s plight is reduced to background scenery – dim, distant and largely ignored – in this grand performance.

Old Hegemony, New Reality

This may be one of the most ironic tableaus in today’s geopolitics. A hegemon carelessly lights a fire that scorches the follower walking closest behind, then turns to the distant audience and repackages the blaze he cannot control as a “gift” to others.

Make no mistake: this is more than an alliance trust deficit. It is the story of an ageing hegemonic playbook – threaten, escalate, claim credit – colliding head‑on with a reality in which partners pay the price.